Introduction

Immediately following the first clear definitions of Generative Engine Optimization (GEO), the SEO world went into overdrive. First came denial. Then came the reframing: GEO is just SEO with a new name. And finally, came the admission: there were real gaps, followed by a predictable response: most SEO platforms rushed to bolt “AI features” onto their existing platforms. The issue is that they remain focused on SEO, offering very few solutions that address the actual requirements of GEO.

At the same time, GEO platforms emerged with a different kind of energy: confidence, momentum, and a sense that they had discovered a brand-new problem with brand-new rules. Many of them positioned GEO as something separate from SEO, sometimes even as an SEO killer! In practice, most GEO platforms treated SEO as irrelevant and ignored years of proven lessons, signals, and workflows that still matter when you want to show up inside AI answers.

How SEO and GEO Drifted in Opposite Directions?

The result is not a winner and a loser. It’s a fracture. We need to acknowledge the gap. We’ll unpack why modern optimization needs both SEO and GEO, and why this is exactly what most platforms miss. We need SEO for crawlability, indexing, and search demand. GEO isn’t valuable because it produces nice dashboards of AI citations, mentions, and visibility; it’s valuable when those signals turn into actions that improve outcomes. The real problem sits in the middle: building a bridge between SEO and GEO, since optimization touches both worlds and neither works well without the other.

Before diving deep into SEO and GEO, it is better to understand how LLMs provide responses to users. There are two main paths: a user asks a question that doesn’t require any information beyond the model’s training data, or the answer requires access to the outside world, typically through retrieval (web search, browsing, or connected sources) to gather fresh, verifiable context. In the second path, SEO and GEO become tightly coupled: SEO ensures content is discoverable and machine-readable (crawlable, indexable, well-structured), while GEO focuses on getting that content selected, trusted, and cited within AI-generated answers.

Maybe it needs up-to-date information that is newer than training data, or it is a subject that LLMs don’t have any understanding of. The key thing that platforms miss is that for both options, we need optimization! Yes, even for questions that don’t need external look-up, you still need optimization.

How does an LLM decide whether to search the web?

Before an AI ever “searches” the internet, it goes through a fast internal decision-making process. You can think of an LLM as a librarian who first checks their own memory before walking over to the shelves, or calling another library.

The process starts with query analysis. The model evaluates whether the question is about something static or something that changes frequently. Questions about fundamentals, definitions, or well-established knowledge can often be answered directly from the model’s internal representations. Questions involving freshness, such as current events, live prices, recent announcements, or fast-moving facts, immediately signal that relying on training data alone would be risky.

Next comes a confidence check. Even if the topic isn’t obviously time-sensitive, the model estimates how confident it is in the information it has. When that confidence falls below a defined threshold, the model refrains from guessing. Instead, it triggers an external retrieval step to reduce the risk of making up information that isn’t actually true or supported by real sources. This behavior is called hallucination.

What Happens Before an LLM Talks to Crawlers?

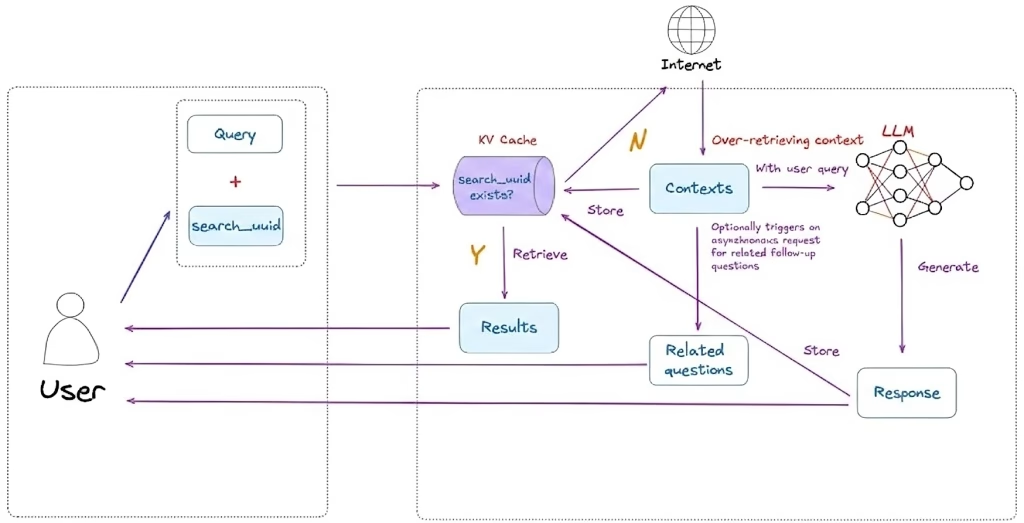

If retrieval is needed, the model doesn’t simply forward the user’s original question to a search engine. It first performs query rewriting. The conversational input is transformed into one or more structured, keyword-oriented queries optimized for search systems. This step, often called fan-out query generation, allows the model to explore multiple angles of the same question and retrieve broader, more relevant context.

Only after this pre-search phase does the model interact with crawler and retrieval infrastructure to fetch up-to-date information from the web. This design ensures two things: the model doesn’t waste resources searching for information it already knows, and when it does search, it does so with intent and precision.

To understand why optimization needs both SEO and GEO, we first need to understand what an LLM crawler actually is, and that not all crawlers serve the same purpose.

What are the different types of AI crawlers?

Not all AI crawlers exist to answer user questions. In practice, they fall into three distinct categories, each with very different implications for optimization.

AI training bots are the most resource-intensive. They systematically collect large volumes of data to train and improve foundation models. These crawlers tend to crawl deeply and repeatedly, consuming significant bandwidth and server resources. Examples include GPTBot (OpenAI), ClaudeBot (Anthropic), and Meta-ExternalAgent (Meta). Their primary goal is model development, not real-time visibility.

AI indexing bots are closer to traditional search crawlers. They navigate and index web content to build structured knowledge bases that support AI-powered search and answer generation. While similar in spirit to classic search engine indexing, they are optimized for downstream AI use. Examples include OAI-SearchBot (OpenAI), Claude-SearchBot (Anthropic), and PerplexityBot (Perplexity AI).

AI retrieval bots operate on demand. They are activated in real time when an AI system needs specific information to answer a user query. These bots make targeted requests rather than broad crawls and are directly tied to response generation. Examples include ChatGPT-User, Claude-User, and Perplexity-User, which fetch live content when the model determines it cannot rely solely on its internal knowledge.

What the crawler numbers really tell us and what they don’t?

According to Vercel’s 2025 research on AI crawler traffic, the volume of automated fetches across modern AI systems is already massive. In just the past month:

- Googlebot recorded approximately 4.5 billion fetches across Search and Gemini

- GPTBot generated 569 million fetches

- Claude-related crawlers accounted for 370 million fetches

- AppleBot reached 314 million fetches

- PerplexityBot logged 24.4 million fetches

At first glance, these numbers suggest explosive growth in LLM-driven web activity. But taken at face value, they can be misleading. We’ve already seen that not all crawlers are used for the same purpose. These figures don’t clearly distinguish between training, indexing, and retrieval activity, nor do they tell us which requests directly influence AI-generated answers versus long-term model learning.

There’s another important nuance: Google largely reuses the same crawler infrastructure for both traditional search indexing and Gemini-powered web retrieval. That means the act of “searching the internet” for an AI answer doesn’t necessarily look different at the crawl level; it’s the decision logic and downstream use of that data that changes.

Crawlers didn’t change. The rules to use the content did.

Despite all the hype around AI-driven search, one thing hasn’t fundamentally changed: the way crawlers discover content on the web still follows classic SEO principles.

Google is explicit about this. According to its documentation on AI features, appearing in AI-powered experiences relies on the same foundational SEO best practices used for traditional search. Pages must meet Google’s technical requirements, follow Search policies, and focus on creating helpful, reliable, people-first content. In other words, if a page isn’t crawlable, indexable, and understandable, it won’t make it into AI features either.

What Crawlers Still Expect From Your Site?

The same applies to the practical mechanics of crawling. Ensuring access through robots.txt, avoiding CDN or hosting blocks, maintaining strong internal linking, providing good page experience, and exposing core content in clean textual form are still mandatory. Structured data must align with visible content, media should support, not replace, text, and business metadata needs to be accurate and up to date. These aren’t optional optimizations; they’re the price of entry. Another reason SEO fundamentals still matter is practical: most AI crawlers are still inefficient, and that inefficiency shows up directly in your infrastructure costs.

Vercel’s analysis highlights how much crawler traffic is effectively wasted, ChatGPT spends roughly a third of its fetches on 404 pages and a meaningful additional share following redirects, with Claude showing similar patterns. A deeper look suggests these bots frequently probe outdated URLs (for example, old /static/ assets), which points to immature URL selection and validation strategies. At this stage, crawlers often over-retrieve information. They collect broadly and defensively, preferring to gather too much context rather than risk missing something important. That behavior hasn’t changed with AI. Up to this point, SEO alone is enough.

But this is where the old playbook stops working.

Being crawled, indexed, and even ranked no longer guarantees that your content will be used, selected, or cited inside AI-generated responses. Once the content is retrieved, a different set of mechanisms takes over, ones that SEO was never designed to influence. This is the moment where optimization shifts from discovery to utilization.

Welcome to the GEO era.

How does Generative Engine Optimization fulfill the optimization?

Now imagine you have perfect SEO. Your site is healthy, crawlable, and fully indexed. Everything works exactly as it should. And yet, that alone is no longer enough. Your content still needs to be meaningful to an LLM.

Even with perfect technical SEO, your content still has to be discovered, and most crawlers discover new URLs through links, internal links, and backlinks. Links don’t just help navigation; they help crawlers find your pages faster and add credibility by pointing to sources that corroborate what you’re saying.

That’s also why distribution is part of optimization. Social posts, community sharing, and word of mouth accelerate discovery, and this is something a real GEO platform should account for, not treat as “marketing” outside the system.

To complete the visibility journey, we have to look at the bridge between the search engine’s index and the AI’s final response. SEO gets you into the library. GEO ensures the librarian actually picks up your book and quotes it to the reader.

Winning this AI selection process requires moving beyond keywords and into semantic relevance. Once a crawler has fetched your content, the LLM performs a high-speed evaluation to decide whether that content is a suitable ground-truth source. This process begins with embedding similarity.

How does query fan-out affect content retrieval?

Because modern LLMs rely on query fan-out, generating multiple hidden sub-queries behind a single user question, your content must be semantically dense, meaning it packs a high amount of clear, specific, and directly relevant information per paragraph (with strong entities, definitions, relationships, and concrete details) so it can match many related sub-questions and be safely selected and cited. Headers and paragraphs should naturally mirror the logical sub-questions an AI would ask when researching the topic. When this alignment exists, your content’s vectors closely match the model’s internal search intent, increasing the likelihood of selection.

Alignment alone isn’t sufficient. The model then applies an accuracy and quality filter. Modern LLMs are explicitly trained to prioritize factuality, clarity, and signal density over marketing language or filler. During the synthesis phase, the model extracts high-signal “chunks” of information. Content written with clear, declarative statements, well-scoped explanations, and expert-level detail is statistically more likely to be selected and cited.

The structure that gets your content discovered is largely SEO, while the similarity that gets your content used is GEO. SEO shapes how crawlers find, parse, and prioritize your pages. GEO shapes how models match, trust, and quote your content once it’s retrieved. The real optimization is connecting these two layers into one system instead of treating them as separate worlds.

To guide this process more directly, GEO introduces control over the front door of your site through an llms.txt file. Similar in spirit to robots.txt, this markdown file acts as a high-density reference layer for generative engines. Placed at the root of your site, it highlights critical pages, authoritative resources, and structural context, allowing models to understand what matters most without parsing messy HTML or complex client-side logic.

Finally, GEO requires navigating what I call the training trade-off. A common reaction is to block all AI crawlers to “protect” content. But if you want your brand to be something an AI knows, not just something it occasionally retrieves, you must allow training bots access to a controlled subset of your core content. SEO ensures Googlebot indexes your live, up-to-date pages for retrieval. GEO ensures your brand, concepts, and authority become part of the model’s long-term internal understanding.

When these two worlds are connected, visibility doesn’t stop at indexing.

Your content isn’t just found, it’s understood, selected, and cited.

Conclusion

In Gander, we look at the entire journey, from being discoverable to being cited. Visibility doesn’t begin at the AI answer, and it doesn’t end at indexing. It starts with crawlability and discovery, continues through retrieval and semantic evaluation, and only then reaches selection, synthesis, and citation. SEO covers the first part of that pipeline. GEO addresses the last. What’s been missing is the connective layer that explains how signals flow between them.

Gander exists to close that gap. As a complete AI analytics tool, it connects SEO signals, crawler behavior, retrieval patterns, and AI response outcomes into a single system. Instead of optimizing in silos, teams can finally see how their content moves from the open web into AI-generated answers.

About the Author: Mehrad Soltani

Mehrad Soltani is an AI Engineer at Gander, where he works on AI analytics and insight-generation systems that analyze how brands and content appear across AI-driven search and generative platforms.