Does publishing date-stamped content actually increase your visibility in AI search?

Most GEO research answers this question by measuring citations, the links that appear in the final AI response. We measured the retrieval layer, the websites LLMs visit while composing those responses (also called sources).

An LLM often reads five fresh review articles to triangulate an answer, then cites the brand’s official homepage in its output. If you only measure citations, you miss opportunities for optimization.

We analyzed unique sources across ChatGPT, Google AI Overviews, Gemini, and Perplexity during Q1 2026, measuring both the retrieval layer and the final citation response.

Here is what we found.

Summary

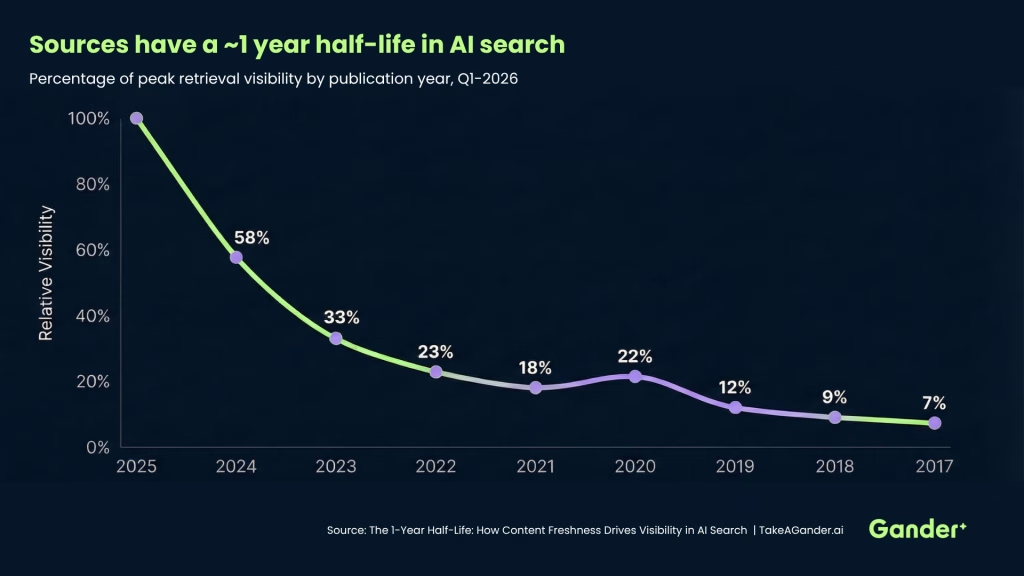

- Content has a 1-year half-life in AI search. Each year of age reduces a page’s visibility by roughly 40-60%. Two-year-old content operates at 33% of current visibility. Three-year-old content is below 25%.

- The AI adds dates to searches on its own. In 23.4% of the fanout queries ChatGPT ran behind the scenes, it injected a year into its search, even when users did not include one. It targets the most recent available data, not necessarily the current calendar year.

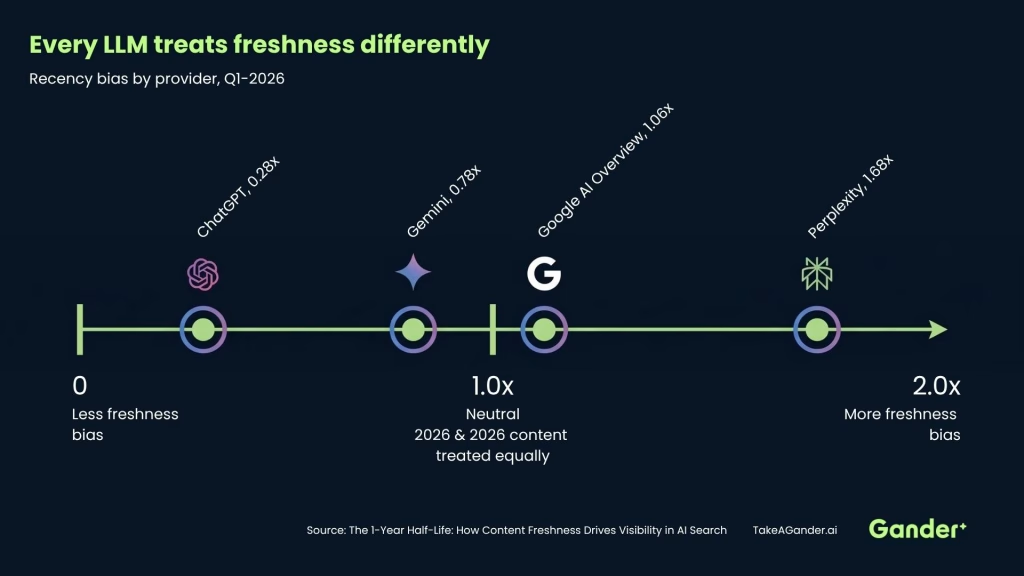

- Provider architecture determines how much freshness matters. Perplexity shows a 1.69x bias toward current-year content. Gemini shows almost none (0.78x). Where your audience reads AI responses determines how much freshness matters for your strategy.

- Fresh content shapes AI answers even without earning the citation. The retrieval layer (what the AI reads) is far more recency-biased than the citation layer (what the AI shows). Publishing fresh content directly influences the AI’s conclusions.

Why does this matter?

Content freshness has become one of the most studied variables in AI search visibility. Before presenting our data, it’s worth situating these findings within the broader body of research.

Ahrefs’ analysis of 17 million AI citations found that content cited by AI assistants is approximately 25.7% fresher than content appearing in traditional Google search results. Passionfruit’s research confirms this at the page level: 76.4% of ChatGPT’s most-cited pages had been updated within the last 30 days.

The effect is not subtle. Seer Interactive’s study across 5,000+ URLs found that 65% of AI bot hits target content from the past year, 79% from the last two years, and 89% from the last three. Kevin Indig’s State of AI Search Optimization 2026 report quantifies the penalty for neglect: pages not updated quarterly are 3x more likely to lose their citations entirely.

There is also direct code-level evidence. Researcher Metehan Yesilyurt reverse-engineered ChatGPT’s production search configuration and found [use_freshness_scoring_profile: true] in the reranker settings. Freshness is not an emergent behavior. It’s an explicit scoring signal.

Perhaps most revealing is the Waseda University study (published at ACM SIGIR 2025), which tested 7 LLMs by prepending artificial publication dates to passages. “Fresh” passages were consistently promoted, with individual items shifting by as many as 95 rank positions and pairwise preferences reversing by up to 25% after date injection. The bias was present in every model tested, though larger models attenuated the effect.

Our study adds a dimension most of this research does not cover: the internal retrieval layer. Most studies measure what AI engines cite. We measured what they read.

How fast does content age out of AI search?

Out of total unique URLs that AI engines pulled into their retrieval layer during Q1 2026, 17.4% contained a year in the URL path. Among those dated URLs, recent content dominated:

The sharpest drop is the most recent: 2025 to 2024 loses 42%, and 2024 to 2023 loses another 43%. By 2021, a page operates at roughly 18% of peak visibility. Content loses approximately half its AI retrieval visibility with each passing year.

This aligns with external data. ConvertMate’s analysis of 80 million+ citations found that content updated within 30 days receives 3.2x more AI citations. Rank.bot’s research states that most LLM citations occur within 2-3 days of publishing, decaying from a 2% citation rate at peak to 0.2% after six months.

There is one exception worth noting. Content from 2020 appears at a higher rate than 2021 (0.22x vs 0.18x). The most likely explanation is the volume of COVID-era government and institutional content that remains in active retrieval.

Actionable Takeaway: If you operate in an industry that relies on annual data (legal rankings, university employment reports, product reviews), ensuring URL slugs and title tags have the current year is a baseline requirement for AI retrieval visibility.

Does 2026 content already dominate AI results?

You might expect “2026” content to dominate in Q1 2026. It does not.

2025 is still the peak retrieval year, appearing at 2.56x the rate of 2026 content:

Year | URLs | % of Dated | Rate vs 2025 |

2026 | 3,805 | 11.3% | 0.39x |

2025 | 9,722 | 28.8% | 1.00x |

2024 | 5,614 | 16.7% | 0.58x |

Only 11.3% of dated URLs belong to 2026, compared to 28.8% for 2025. This is not because AI engines prefer old content. It’s because the web has not yet populated with 2026-dated pages. Most annual publications, industry rankings, and “best of” roundups have not released their 2026 editions by March.

For content strategists, this creates two implications:

- Q1 is a grace period. Your 2025 content retains full retrieval value for approximately three months into 2026. There is no cliff on January 1.

- Early publishers win. If you are first to publish “Best of 2026” or “2026 Guide” content in your category, you capture outsized retrieval traffic while competitors are still running on prior-year content. The AI is already looking for 2026 content. There is just very little of it to find.

Does the AI add dates to its searches on its own?

Yes, and the evidence for this is now overwhelming.

The most structurally revealing data comes from the fan-out queries, which are the web searches ChatGPT runs while composing a response. Out of 23,973 fan-out queries we examined in Q1, 23.4% explicitly included a year, even when the original user prompt contained no year whatsoever.

This matches findings across multiple independent studies. Qwairy observed a 28.1% year injection rate across 102,018 fanout queries. Quattr found that AI systems automatically add the current year to queries, with “2026” appearing 184x more often than “2025” in generated searches. The Waseda University study confirmed the mechanism at a model level: LLMs systematically promote content with more recent dates.

Metehan Yesilyurt’s reverse-engineering of ChatGPT’s search pipeline provides the code-level explanation. The production reranker model (ret-rr-skysight-v3) runs with use_freshness_scoring_profile: true, meaning freshness is an explicit scoring input, not an accident of training data. The AI is applying its own QDF (Query Deserves Freshness) filter before it even reaches Google.

Which years does the AI actually target?

The AI does not blindly target the current calendar year. It targets the most recent available data:

Year | % of Year-Tagged Queries |

2025 | 38.6% |

2024 | 30.6% |

2026 | 19.9% |

2023 | 5.0% |

<=2022 | 5.9% |

2025 is the most frequently targeted year (38.6%), followed by 2024 (30.6%), and only then 2026 (19.9%). The model spreads its searches across the two to three most recent years based on what data is likely to exist.

How does an LLM know which year to search for?

The year targeting is not random. LLMs understand that different types of content have different publication schedules:

2026 queries target content that updates in real time:

- “best gyms in Vancouver 2026”

- “best dishwasher under $2000 Canada 2026”

- “best vacuum for pet hair 2026 Canada”

2025 queries target annual reports that have been published:

- “BMO Global Asset Management AUM ‘assets under management’ 2025”

- “CPP Investments assets under management ‘as of’ 2025”

- “best HIIT studios Toronto 2024 2025 list”

2024 queries target data with known publication lags:

- “OMERS assets under management ‘as of December 31, 2024′”

- “Forrester Wave data resiliency 2024”

The model is doing exactly what a competent analyst would do: search for the most recent published data. For consumer content like gym reviews, that means 2026. For fiscal year financial reports, that means 2024 or 2025. For legal rankings that publish annually, that means whatever edition is most recent.

Actionable Takeaway: If you produce annual publications (financial reports, industry rankings, salary surveys), the AI will find and retrieve your most recent edition regardless of what year it was published. Publish as early in the year as possible to capture retrieval traffic before competitors release their editions.

Which LLMs care most about freshness?

Not all LLMs treat freshness equally. The provider your audience uses determines how much freshness matters for your strategy.

Recency Ratios (2026:2025)

Provider | Ratio | Interpretation |

Perplexity | 1.69x | Already favoring 2026 content despite limited availability |

Google AI Overviews | 1.06x | Roughly balanced between 2026 and 2025 |

Gemini | 0.78x | Minimal freshness preference |

ChatGPT | 0.28x | Heavily retrieves 2025 (reflects content availability, not bias) |

These rankings are consistent with Averi.ai’s analysis of 680 million citations, which found that Perplexity weights freshness at 40% of its ranking signal, while ChatGPT prioritizes referring domains at 30% and Gemini focuses on E-E-A-T signals at 35%. Each platform has a distinct retrieval personality.

Perplexity shows the most extreme recency bias. Even with limited 2026 content available in Q1, it retrieves current-year pages at 1.69x the rate of prior-year pages. Perplexity’s real-time retrieval architecture aggressively surfaces the newest available content. Quattr’s research confirms this independently: approximately 50% of Perplexity’s citations come from content published or updated in the current year alone.

ChatGPT has the lowest ratio (0.28x), but this is not a lack of freshness preference. ChatGPT produces the most sources (142,970 unique) and runs extensive web searches that retrieve whatever is most available. In Q1, 2025 content is simply more abundant than 2026. ChatGPT’s dated URL share percentage is the highest of any provider at 19.9%, indicating it engages heavily with date-stamped content. Passionfruit’s data supports this: 76.4% of ChatGPT’s most-cited pages had been updated within 30 days.

Google AI Overviews sits in the middle (1.06x). It also shows a notable 2020 anomaly with 447 dated URLs, likely connected to government and institutional pages from the pandemic era. According to The Digital Bloom’s 2026 report, AI Overviews now appear on roughly 48% of tracked queries, up 58% year-over-year.

Gemini is the least recency-biased (0.78x). Its architecture does not prioritize freshness. If your audience primarily encounters your content through Gemini, freshness optimization has the lowest marginal return.

Actionable Takeaway: If your traffic comes from Perplexity or ChatGPT, publish early in the year with the current date. If it comes from Gemini or AI Overviews, domain authority and content depth still outweigh freshness.

Can fresh content influence AI answers without being cited?

Yes, and this finding reframes how to think about content ROI when optimizing for AI search.

The grounding layer (what the AI reads) is far more recency-biased than the citation layer (what the AI shows the user):

Layer | 2026:2025 Ratio |

Citation (shown to user) | 0.65x |

Source (grounding, hidden) | 0.35x |

QuerySource (search results, hidden) | 0.28x |

LLMs read heavily from the most recently-published content to inform its answer, then cites a mix of recent and authoritative pages in its final output.

This means date-stamped, opinionated review content earns influence at the retrieval layer, shaping what LLMs conclude, without earning the citation. The AI might read three “Best of 2026” review articles to determine the top CRM platform, then display the CRM’s official homepage as the cited link.

ConvertMate’s research quantifies a related dimension: ChatGPT mentions brands 3.2x more often than it provides clickable citations. The retrieval layer shapes what the AI says, but the citation layer is selective about what it links to.

Kevin Indig’s research identifies the same dynamic from the strategy side, distinguishing between retrieval layer, citation layer, and trust factors as three separate determinants of LLM visibility. Optimizing for one does not guarantee the others.

For content strategy, grounding influence and citation credit are separate optimization targets. A strategy that conflates the two will optimize for the wrong layer.

What about the 83% of sources without a year?

Before restructuring your entire content calendar around dates, the scope needs to be clear.

Of the total unique sources in this dataset, 82.6% contain no year in the link. Freshness in any given source is a signal that applies to roughly one in five sources an LLM touches.

That majority competes on a different set of signals entirely: semantic relevance, domain authority, content comprehensiveness, and citation by other authoritative sources. Averi.ai’s analysis of 680 million citations found that sites with 32K+ referring domains are 3.5x more likely to be cited by ChatGPT, and that 44.2% of all LLM citations come from the first 30% of a page’s text, suggesting content structure and comprehensiveness matter as much as freshness for undated content. Freshness is a multiplier on top of those factors, not a replacement for them.

The topic dependency is sharp:

Topic Category | Dated URL % |

Financial services (AUM, pension funds) | ~30-50% |

Legal rankings (Chambers, Legal 500) | ~40-55% |

Education (MBA employment reports) | ~20-35% |

Consumer products (reviews, roundups) | ~10-20% |

Local services (daycare, recycling) | ~0-5% |

Seer Interactive saw this play out in practice: one client saw +300% AI traffic after refreshing outdated content, but the gain was concentrated in categories where freshness was part of the query intent. Local services and evergreen reference content barely moved.

Editor’s note: Duane Forrester’s article about content’s missing middle, corroborates citation and extraction patterns describe in this section.

How do you signal freshness without changing your URL?

This study measures year-in-URL as a freshness proxy, but that is only one signal AI retrieval systems and web crawlers can read. For the 83% of URLs that do not carry a year in the path, there are three other ways to signal recency:

- dateModified in Schema markup: Adding schema property “dateModified”: “2026-01-15” tells crawlers exactly when the content was last updated, without any URL change.

- lastmod in XML sitemaps: Signals which pages have been recently updated, directly influencing crawl frequency and how quickly refreshed content gets re-indexed.

- HTTP Last-Modified response headers: A server-level signal indicating when the resource was last modified, used by crawlers as a low-level freshness cue.

These mechanisms allow evergreen pages to accumulate freshness signal over time without sacrificing the link equity and ranking stability of an established URL.

Actionable Takeaway: Freshness is a multiplier on existing domain authority, not a replacement for it. For high-value evergreen pages, implement Schema property dateModified and update lastmod in your sitemap every time you make meaningful content revisions.

Conclusion

The data points to a model of AI visibility that is more nuanced than “publish fresh content and rank.” Freshness is real, it’s measurable, and it’s steep, but it operates on a specific slice of the content landscape, through a specific mechanism, and only for certain AI architectures.

AI search has two separate visibility layers, retrieval and citation. The retrieval layer is where an LLM does its research. It’s recency-biased, architecture-dependent, and largely invisible to the end user. The citation layer is what the user actually sees. It favors authoritative, evergreen pages.

For practitioners, consider:

- If your industry produces annual data (rankings, reports, salary surveys), date-stamped URLs with current-year slugs are a hard requirement for retrieval-layer visibility. The 1-year half-life means each year without an update costs 40-60% of AI visibility.

- If your audience uses real-time retrieval engines (Perplexity, ChatGPT), recency is your primary competitive variable. Publish early in the year with the current date.

- Q1 is a strategic window. The prior year’s content retains full retrieval value for the first three months of a new year. First-movers who publish current-year content earliest capture outsized retrieval traffic.

- If your content is evergreen, invest in semantic freshness signals (dateModified, lastmod) and the kind of authoritative depth that survives the freshness filter entirely.

Freshness is not replacing authority in AI search. It compounds with it. The highest-visibility content in our dataset was recent and authoritative. The lowest-risk long-term strategy is building toward the same.

Appendix: How does this compare to our December 2025 baseline?

We first measured content freshness in AI search during a 12-day window in December 2025. The Q1 2026 dataset is 13x larger. Comparing the two windows validates whether the findings above are durable or seasonal.

Is the decay curve stable across measurement windows?

The decay from 2025 backward is comparable across both windows:

December 2025 | Q1 2026 |

2025: 1.00x | 1.00x |

2024: 0.62x | 0.58x |

2023: 0.25x | 0.33x |

2022: 0.18x | 0.23x |

2021: 0.09x | 0.18x |

The 2025-to-2024 drop is 42% in Q1 2026 versus 38% in December. The curve shape is consistent: steep initial decay, flattening into a long tail. This confirms the half-life as a structural property of AI search, not an artifact of one measurement window.

Did the provider rankings change?

Provider | Dec 2025 (2025:2024) | Q1 2026 (2026:2025) |

Perplexity | 5.14x | 1.69x |

ChatGPT | 1.68x | 0.28x |

Google AI Overviews | 1.62x | 1.06x |

Gemini | 1.04x | 0.78x |

Every provider’s Q1 ratio is lower than December. This is primarily the Q1 lag effect (less 2026 content exists), not a behavior change. The rank order is preserved, with Perplexity being the most biased, Gemini the least.

How did fan-out query behavior shift?

Metric | December 2025 | Q1 2026 |

Year injection rate | 29.6% | 23.4% |

Current-year queries (% of tagged) | 53.0% | 19.9% |

Prior-year queries (% of tagged) | 43.6% | 38.6% |

The injection rate dropped modestly. The bigger shift: in December, the AI favored the current calendar year (53% of tagged queries). In Q1 2026, it distributes across 2025 (38.6%), 2024 (30.6%), and 2026 (19.9%), reflecting awareness that 2026 editions of most publications do not yet exist.

Key metrics side by side

Metric | December 2025 | Q1 2026 |

Unique URLs | 14,764 | 194,077 |

Dated URL share | 12.1% | 17.4% |

Half-life (peak -1yr decay) | 0.62x | 0.58x |

Year injection rate | 29.6% | 23.4% |

Peak year = calendar year | Yes | No (Q1 lag) |

References

1. Ahrefs, Fresh Content: Why Publish Dates Make or Break Rankings and AI Visibility — AI-cited content is 25.7% fresher than traditional search results, based on analysis of 17 million citations.

2. Qwairy, Content Freshness & AI Citations Guide (2026) — AI systems inject the current year into 28.1% of sub-queries automatically. Analysis of 102,018 AI-generated queries.

3. Sight AI, Essential Content Freshness Signals For SEO: Best Tips — Covers technical freshness signals (last-modified headers, XML sitemap lastmod, HTTP caching) and their impact on crawl frequency.

4. Seer Interactive, Study: AI Brand Visibility and Content Recency — 65% of AI bot hits target content from the past year; 79% within two years. One client saw +300% AI traffic after refreshing outdated content.

5. Fang, Tao et al. (Waseda University), Do Large Language Models Favor Recent Content? (ACM SIGIR 2025) — Tested 7 LLMs; “fresh” passages shifted by up to 95 rank positions. Pairwise preferences reversed by up to 25% after date injection.

6. Metehan Yesilyurt, The Recency Bias That’s Reshaping AI Search — Reverse-engineered ChatGPT’s production search config: use_freshness_scoring_profile: true in the reranker settings.

7. Kevin Indig / Growth Memo, State of AI Search Optimization 2026 — Content less than 3 months old is 3x more likely to get cited. Pages not updated quarterly are 3x more likely to lose citations. Distinguishes retrieval layer, citation layer, and trust factors.

8. Passionfruit, Why AI Citations Come from Top 10 Rankings — 76.4% of ChatGPT’s most-cited pages updated in the last 30 days. 40.58% of AI Overview citations pull from Google’s top 10 organic results.

9. ConvertMate, AI Visibility Study 2026 — Content updated within 30 days receives 3.2x more AI citations. ChatGPT mentions brands 3.2x more often than it provides clickable citations. Analysis of 80 million+ citations.

10. Averi.ai, ChatGPT vs. Perplexity vs. Google AI Mode: The B2B SaaS Citation Benchmarks Report (2026) — Perplexity weights freshness at 40%; ChatGPT prioritizes referring domains at 30%; Gemini focuses on E-E-A-T at 35%. Analysis of 680 million citations.

11. Rank.bot, Content Freshness: 2-3 Day Window for AI Citations — Most LLM citations occur within 2-3 days of publishing (2% rate), decaying to 0.2% after 6 months. 10x decline from peak to 6+ months.

12. The Digital Bloom, 2026 AI Citation Position & Revenue Report — AI Overviews appear on 48% of tracked queries, up 58% YoY.

13. Quattr, AI Search & Content Freshness: Why Updates Improve Visibility — ~50% of Perplexity’s citations from content published/updated in the current year. “2026” appears 184x more often than “2025” in AI-generated queries.

Edited by: Adam Malamis

About the Author: Mehrad Soltani

Mehrad Soltani is an AI Engineer at Gander, where he works on AI analytics and insight-generation systems that analyze how brands and content appear across AI-driven search and generative platforms.