Volume 1

2025 represents a period of significant transition in search marketing. In the last year, we’ve seen generative engines, like ChatGPT, Gemini, Claude, and Perplexity, secure an unprecedented amount of market share from Google.

We interviewed twenty-two search professionals to understand how the industry is responding to AI search. This report captures their insights and thoughts.

TL;DR Summary

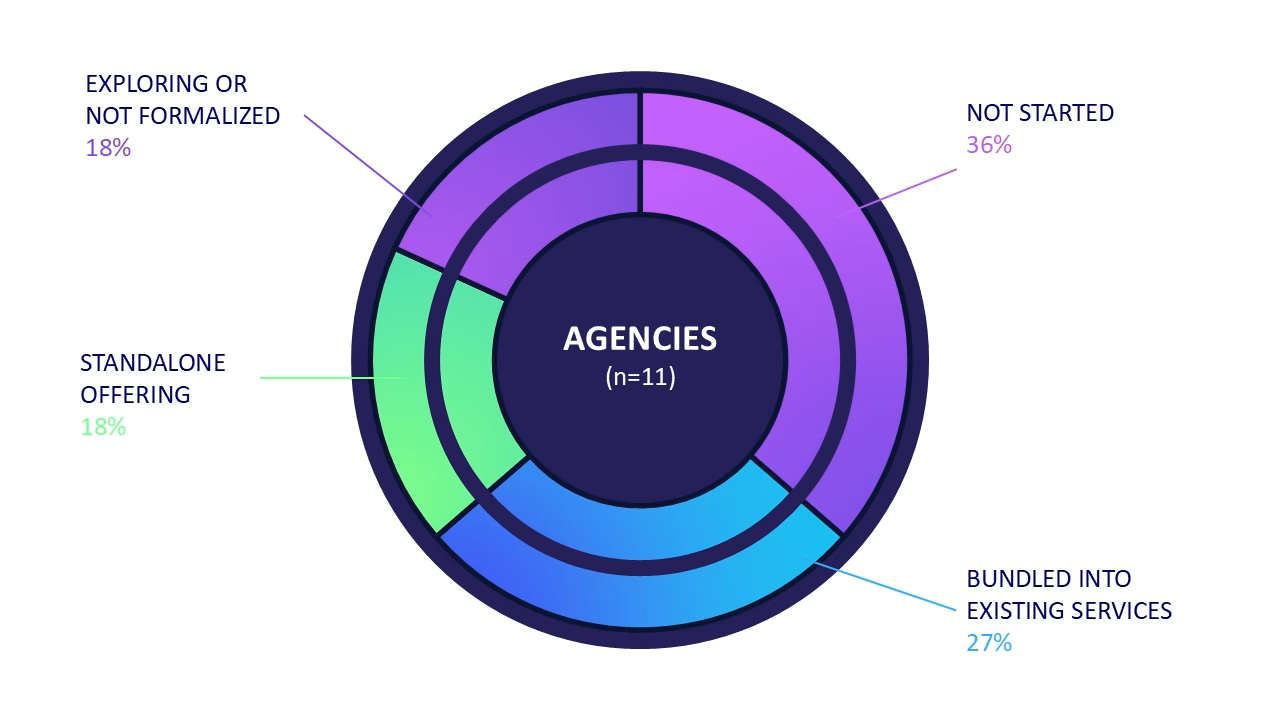

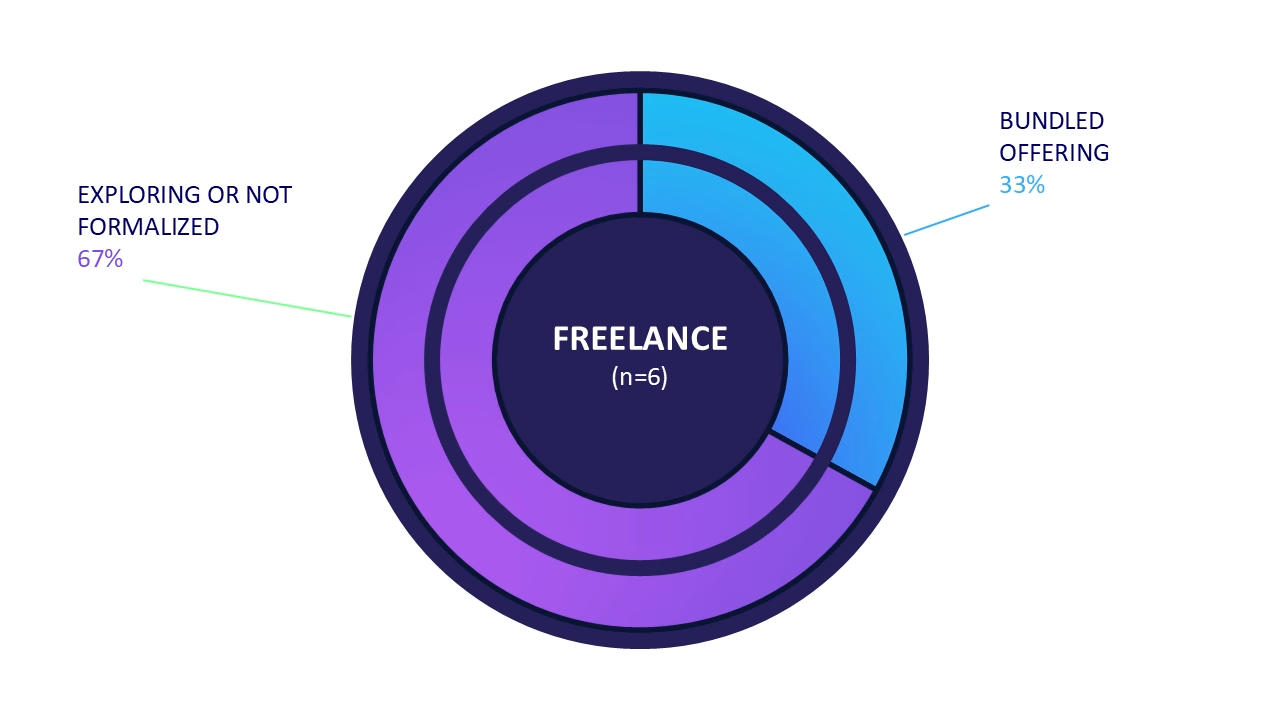

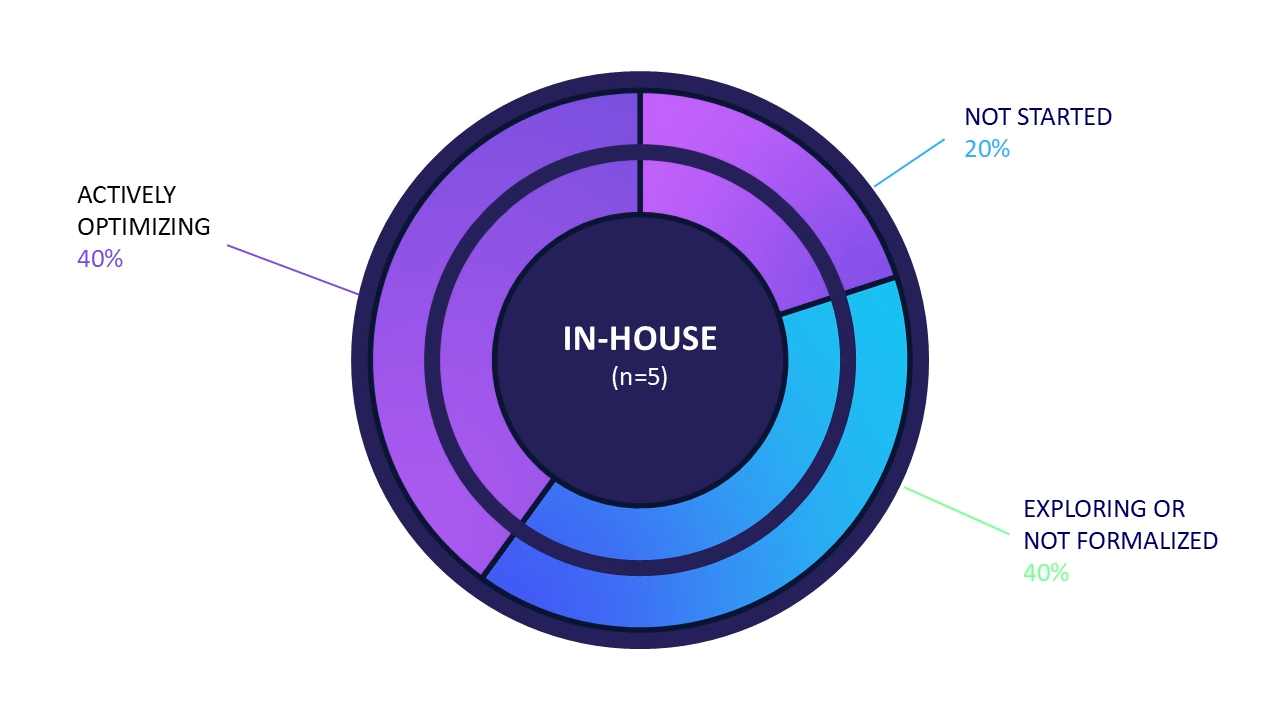

Who we talked to: 11 agencies, 6 freelancers, and 5 in-house practitioners, based in Canada, the US, the UK, and elsewhere. Client verticals varied and are not representative of the broader market.

Have you developed a GEO offering?

The majority of respondents haven’t formalized an offering. Agencies are split between bundling GEO into existing SEO retainers (36%) and not starting at all (36%). Freelancers are exploring but not charging separately. In-house teams call it a priority but haven’t dedicated headcount or budget.

Value-add or new line item?

Overwhelmingly value-add. Only 2 of 11 agencies charge separately for GEO. Most are using it to retain clients, not generate new revenue.

What are clients asking?

“How do we show up?” and “Why do competitors appear and we don’t?” The harder question may be “How do we become THE cited source?”, and practitioners are still figuring out how to answer it. Attribution and ROI remain a gap: clients expect GEO to be measurable like SEO, but the data infrastructure doesn’t exist yet.

What are you guessing about?

Prompt volume is universally distrusted. No one believes the data from any platform. Secondary: whether optimization efforts actually cause visibility changes.

Are you using a visibility tool?

Fragmented. Semrush most mentioned, also most criticized. Profound has traction but limits query frequency. PeecAI is viewed similarly. Many are triangulating multiple tools or relying on manual Looker Studio dashboards. No industry standard has emerged.

What’s your reporting process?

Unstandardized. Practitioners described patchwork approaches, including log file testing, GA4 referral data, and incognito spot-checks. None of the respondents articulated a repeatable workflow or gave a time estimate.

Where is the GEO narrative overblown?

“SEO is dead” is universally rejected. Self-proclaimed GEO experts are widely distrusted. Case study claims are viewed as exaggerated or unverifiable. Technical fundamentals are seen as more important than ever.

Methodology and Research Design

The findings in this report are based on a thematic analysis of twenty-two (22) qualitative interviews with search professionals (SEOs). Interviews took place between November 1, 2025 and January 31, 2026. We chose this approach to capture how SEOs are adapting to AI search while industry benchmarks are still being defined. To provide a balanced view, we categorized participants by professional grouping—agency, freelance, or in-house.

Participant Segments and Professional Classifications

We included participants from three main professional groups to cover a variety of perspectives.

- Agency (n=11)
  Titles included: Director of Digital Marketing, SEO Executive/Manager, Technical SEO Specialist, EVP Digital, Partner/Founder
- Independent practitioners or freelance (n=6)
  Titles included: SEO Consultant, Senior SEO, Fractional CMO
- Internal marketing teams (n=5)
  Titles included: CMO, Senior Manager Search, Director of Marketing

Limitations

This research provides insight into industry mental models around AI search, but it has several limitations. The sample size of twenty-two participants is large enough for a thematic study, but it does not represent the views of the entire search industry. Participants skew agency-side, meaning that freelancers and in-house teams are not evenly represented.

Because we used a qualitative approach, the findings rely on the personal experiences and opinions of the experts we interviewed. The fast-moving nature of AI search means these trends may change quickly. Finally, most participants were located in Canada, the United States, and the United Kingdom, so the results may not apply to every global market.

Questions Asked

Have you developed a GEO offering for clients?

If so, how would you describe its scope? If not, what factors have stopped you from formalizing an offering so far?

The majority of practitioners have not formalized GEO as a standalone offering. Among agencies, the dominant pattern is integration into existing SEO retainers. Freelancers are split between those bundling GEO into monthly retainers and those still educating clients without a formal scope. In-house teams universally describe GEO as “a priority” but lack formal programs, dedicated headcount, or standardized processes.

Key barriers cited: time constraints, lack of proven methodology, unclear ROI proof, client education burden, and tool/data limitations.

Do you see GEO services as a value-add to retain current SEO retainers, or is this a new, chargeable line item?

Among agencies and freelancers with active or bundled GEO offerings, the overwhelming trend is including GEO services in existing SEO retainers, rather than creating new revenue streams. Only 2 of 11 agencies reported charging separately for GEO services. Freelancers universally bundle GEO into existing retainer structures, when offered at all.

Several respondents noted that charging separately is “an interesting idea” they hadn’t considered, suggesting the market hasn’t yet established GEO as a distinct billable service category. Where price points were cited, they ranged widely, from $2,000 to $8,000 per month for bundled SEO+GEO retainers.

In-house teams described GEO efforts as absorbed into existing marketing or SEO budgets. None reported dedicated headcount or standalone budget allocation for AI visibility efforts.

Have your clients asked you about AI search? If so, what kinds of questions are they asking? What information do you wish you had for them?

Over half of agencies report clients actively asking about AI search, driven primarily by competitive anxiety (“why do they show up and we don’t?”) and C-suite pressure to demonstrate mastery of emerging channels. Freelancers see more mixed engagement. Our cohort of freelancers almost universally mentioned that their clients are still catching up on SEO fundamentals.

In-house teams report leadership-driven interest, though often without accompanying budget or clear success metrics. The universal frustration is the inability to answer the most basic client question: “Is it working?” Without reliable prompt volume data, conversion attribution, or a “Search Console equivalent,” practitioners feel they must educate clients on the limits of measurement before they can discuss strategy. Several respondents noted a disconnect: clients expect GEO to mirror the measurability of SEO or paid media, but reliable first-party data doesn’t exist yet.

Most Common Client Questions

| Question | Frequency |
|---|---|
| How do we show up / get cited in AI search? | High |
| Why do competitors appear and we don’t? | High |
| What’s the ROI / traffic from AI search? | Moderate |
| How do we become THE cited source? | Moderate |
| What should we be doing differently? | Moderate |
| Are we falling behind? | Low |

Information Practitioners Wish They Had

| Gap | Frequency |
|---|---|
| Reliable prompt volume data | Universal |
| Attribution / conversion tracking from LLMs | High |
| Causal link between optimizations and citations | Moderate |
| Search Console equivalent for AI search | Moderate |
| Competitive benchmarks by industry | Moderate |
| Standardized best practices to recommend | Moderate |

What’s the one thing you feel you’re ‘guessing’ about when clients bring up AI search?

Prompt volume emerged as the dominant uncertainty across all segments. Every practitioner who addressed this question expressed distrust in available prompt data, describing it variously as “extrapolated,” “directional at best,” or “completely made up.” Multiple respondents noted that platforms like Semrush and Ahrefs derive prompt volumes from keyword data or clickstream, methodologies designed for traditional search that do not translate to conversational AI queries.

A secondary theme was the impossibility of proving causation. Many respondents believed that GEO lacks the feedback loop to validate whether optimizations are working or whether visibility changes are random. Several respondents noted that identical prompts yield different results across sessions due to personalization, memory, and model updates, making controlled testing unreliable.

| Gap | Mentions (% of 22 respondents) |
|---|---|
| Prompt volume data is unreliable or fabricated | 50% (11) |
| No way to know what prompts users actually enter | 36% (8) |
| Can’t prove causation between changes and visibility | 27% (6) |
| LLM ranking/retrieval factors are opaque | 23% (5) |
| Personalization makes consistent measurement impossible | 18% (4) |
| Best practices are unproven hypotheses | 14% (3) |

“I don’t see how right now a tool can provide [accurate prompt volume data]… We don’t know. It’s very new. Things are changing so fast.”
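Because identical prompts can return different answers from session to session, a single spot-check says little. One way to quantify that noise, offered as an illustration rather than a workflow any respondent described, is to run the same prompt repeatedly and treat “brand cited” as a yes/no outcome. The sketch below assumes you have already collected those outcomes (by hand or with a tool) and computes a Wilson score interval, a standard confidence interval for a proportion. The function name `citation_rate_interval` and the sample data are hypothetical.

```python
import math


def citation_rate_interval(outcomes: list[bool], z: float = 1.96) -> tuple[float, float, float]:
    """Return (observed rate, lower bound, upper bound) via the Wilson score interval.

    outcomes: one entry per repeated run of the same prompt (True = brand cited).
    z: standard-normal quantile; 1.96 gives an approximate 95% interval.
    """
    n = len(outcomes)
    if n == 0:
        raise ValueError("need at least one run")
    p = sum(outcomes) / n  # observed citation rate
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = (z / denom) * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2))
    # Clamp to [0, 1] to absorb floating-point drift at the extremes.
    return p, max(0.0, center - half_width), min(1.0, center + half_width)


if __name__ == "__main__":
    # Hypothetical data: 20 runs of one prompt in each of two periods.
    before = [True] * 6 + [False] * 14  # cited in 6 of 20 runs
    after = [True] * 9 + [False] * 11   # cited in 9 of 20 runs
    for label, runs in (("before", before), ("after", after)):
        rate, low, high = citation_rate_interval(runs)
        print(f"{label}: {rate:.0%} cited (95% CI {low:.0%} to {high:.0%})")
```

With 20 runs per period, a move from 6 to 9 citations produces intervals that overlap heavily, which is one reason it is hard to tie a visibility change to a specific optimization. Overlapping intervals are a rough signal, not a formal significance test, and the approach does nothing about model updates that change behavior between periods.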

Are you using an AI analytics or visibility tool?

| Tool usage | Agency (n=11) | Freelance (n=6) | In-house (n=5) |
|---|---|---|---|
| Dedicated AI visibility tool | 27% | 17% | 0% |
| SEO platform with AI features | 27% | 33% | 40% |
| Manual tracking only | 18% | 17% | 40% |
| Evaluating various tools | 18% | 33% | 0% |
| Not using or evaluating | 9% | 0% | 20% |

Tool adoption is fragmented and tentative. No single platform has emerged as the industry standard. The most common pattern is triangulation, meaning respondents are using multiple tools and cross-referencing outputs due to distrust in any single data source. Several practitioners described building custom Looker Studio dashboards pulling GA4 referral data as a stopgap.

Dedicated AI visibility tools (Profound, PeecAI, Ahrefs Brand Radar) are gaining traction among agencies, though cost and data accuracy concerns limit adoption. Semrush was the most frequently mentioned platform but drew the most skepticism, particularly around its AI features being “bolted on” to an SEO-first architecture.

In-house teams lag behind, relying primarily on manual spot-checks and SEO platforms rather than dedicated AI visibility tools.

“There is no ChatGPT search console. So right now, none of the LLMs provide that data… [A] specific prompt cannot have 700k volume. That’s impossible.”
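The GA4-plus-Looker-Studio stopgap several practitioners described usually starts by separating AI referrals from everything else. The sketch below assumes you have exported rows of (session source, sessions) from a GA4 report. The hostname list is an illustrative assumption, not an official registry, and will need maintenance as platforms change domains. AI visits that arrive without a referrer are not caught, so treat the totals as a floor.

```python
from collections import Counter
from typing import Iterable, Optional

# Illustrative mapping of referrer hostnames to AI platforms (an assumption:
# verify against your own GA4 data, since platforms add and retire domains).
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def platform_for(source: str) -> Optional[str]:
    """Map a GA4 session source (usually a hostname) to an AI platform, or None."""
    host = source.strip().lower()
    if host.startswith("www."):
        host = host[4:]
    for known, platform in AI_REFERRERS.items():
        # Accept the exact host or any subdomain of it.
        if host == known or host.endswith("." + known):
            return platform
    return None


def ai_sessions_by_platform(rows: Iterable[tuple[str, int]]) -> Counter:
    """Sum sessions per AI platform from (session_source, sessions) rows."""
    totals: Counter = Counter()
    for source, sessions in rows:
        platform = platform_for(source)
        if platform is not None:
            totals[platform] += sessions
    return totals


if __name__ == "__main__":
    # Hypothetical export rows; "google" is ordinary organic search traffic.
    rows = [("chatgpt.com", 120), ("google", 5400), ("perplexity.ai", 35), ("chat.openai.com", 8)]
    print(ai_sessions_by_platform(rows))
```

Keeping the mapping in one dictionary makes it easy to audit and update, which matters more here than clever matching.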

If a client asked for a report on their AI visibility today, walk me through the specific steps your team would take to create it manually. How long would that take?

The absence of standardized workflows reflects the nascent state of GEO as a practice area. Practitioners are improvising with a patchwork of SEO tools, custom dashboards, and manual spot-checks. Several respondents expressed frustration at the time required to blend multiple data sources into a coherent narrative.

The lack of process consistency also creates a barrier to scaling GEO services or training junior staff, as there is no established playbook to follow.

No interviewee provided a specific time estimate for manual reporting. When directly asked, one respondent pivoted to questioning whether they even produce AI-specific reports at all.

Author’s note: For reference, Gander’s internal manual audit process, prior to platform development, required approximately 12 hours per client to compile a comprehensive baseline report.

The absence of a standardized playbook is an invitation for experimentation and, in my opinion, offers a key moment to level up as a search professional. GEO is where SEO was in the early 2000s: unrefined, unregulated, and full of opportunity for practitioners willing to experiment. Those who develop and document repeatable workflows now could shape how the industry operates for the next decade.

We encourage practitioners to treat this ambiguity as a competitive advantage: test methodologies, share findings, and collaborate to build an industry playbook.

Where do you feel the industry narrative around GEO is overblown, misleading, or unclear?

Search professionals are generally skeptical of the broader GEO narrative. The most frequently rejected claim was “SEO is dead,” dismissed by nearly every respondent. Claims that traditional search optimization is no longer relevant are viewed as misleading; instead, technical fundamentals such as server-side rendering, structured markup, and entity clarity are viewed as increasingly relevant for AI discoverability. Specifics included:

- Server-side rendering (SSR): Multiple practitioners noted that AI agents cannot yet render JavaScript, making SSR essential for content to be indexed.

- Structured markup and schema: Academic research (UC Berkeley’s GEO16 framework <link>) confirms that structured content causes higher retrieval rates. In mid-to-late 2025, some practitioners were making claims about schema’s role in whether content gets retrieved, but early experiments were poorly designed; some, for example, removed all content from the public-facing site.

- WordPress JSON endpoints: One practitioner discovered ChatGPT actively crawling wp-json endpoints, suggesting WordPress sites may have an inherent advantage for AI indexing.

- llms.txt: Despite industry buzz and Yoast’s early adoption, practitioners report that this proposed standard remains unproven, with log files showing minimal crawler activity.
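Observations like the last two can be checked against your own server logs. The sketch below counts requests to `/llms.txt` and to WordPress `/wp-json/` endpoints from a few AI crawler user-agent tokens. It assumes logs in the common Apache/Nginx “combined” format, and the token list is an illustrative assumption; confirm current crawler names in each vendor’s documentation, and keep in mind that user-agent strings can be spoofed.

```python
import re
from collections import Counter
from typing import Iterable

# Apache/Nginx "combined" format; only the request path and user agent are captured.
LOG_RE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+)[^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

# Illustrative user-agent tokens (assumption; check each vendor's crawler docs).
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Paths discussed above: the llms.txt file and the WordPress REST API.
WATCHED_PREFIXES = ("/llms.txt", "/wp-json/")


def ai_crawler_hits(lines: Iterable[str]) -> Counter:
    """Count (crawler token, watched path prefix) pairs in access-log lines."""
    hits: Counter = Counter()
    for line in lines:
        m = LOG_RE.match(line)
        if m is None:
            continue  # skip lines that are not in combined format
        ua, path = m.group("ua"), m.group("path")
        bot = next((token for token in AI_CRAWLER_TOKENS if token in ua), None)
        if bot is None:
            continue  # not one of the AI crawlers being tracked
        for prefix in WATCHED_PREFIXES:
            if path.startswith(prefix):
                hits[(bot, prefix)] += 1
    return hits
```

Usage: `with open("access.log") as f: print(ai_crawler_hits(f).most_common())`. A near-empty result for `/llms.txt` over several weeks would be consistent with what respondents reported, though it says nothing about how the file is used once fetched.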

Multiple interviewees compared the current GEO landscape to SEO circa 2004: full of self-proclaimed experts, unverifiable claims, and snake oil vendors.

A secondary concern was the inflation of case study results. Practitioners noted that percentage-based gains are easily manipulated (“four-figure increases” from a baseline of one) and rarely include methodology or reproducibility.
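The inflation is simple arithmetic: percentage change divides by the baseline, so a tiny baseline turns a small absolute gain into a headline number. The sketch below, using hypothetical citation counts, reports both figures side by side, which is one way to make case-study claims easier to evaluate.

```python
def describe_change(before: int, after: int) -> str:
    """Report absolute and percentage change in citation counts."""
    if before <= 0:
        # Percentage change from a zero baseline is undefined; refuse rather than divide by zero.
        raise ValueError("baseline must be positive to compute a percentage")
    absolute = after - before
    percent = absolute * 100 / before
    return f"{before} -> {after}: {absolute:+d} citations ({percent:,.0f}%)"


if __name__ == "__main__":
    print(describe_change(1, 15))    # tiny baseline
    print(describe_change(200, 214))  # established baseline
```

Both cases add the same 14 citations, yet one reads as a 1,400% increase and the other as 7%.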

The “GEO is just SEO” narrative, promoted by some prominent industry voices, was also challenged. Respondents acknowledged significant overlap but rejected the claim as an oversimplification that ignores meaningful technical, content-related, and strategic differences.

A handful of respondents expressed concern that GEO tactics “feel reminiscent of old SEO black hat days,” particularly aggressive Reddit campaigns and content manipulation strategies.

Respondents see the narrative around return on investment as unclear. While a citation in an AI-generated summary might benefit brand reputation and top-of-funnel awareness, proving a specific financial return compared with a direct organic click remains an analytical challenge.

“[What] we probably are lacking the most as an industry is solid case study worthy work that can instill trust in other agencies… and C-suite decision makers who are willing to invest in this. So I think that’s probably where [the] snake oil is coming from.”

Author’s Note

After speaking with a small cohort of search professionals about the evolution of search, there seems to be a common sentiment: we’re in the wild west again. While I may love the chaos and opportunity a new space provides, it’s clear that many feel quite differently. You need only browse your LinkedIn feed to see the pendulum swing between opportunism and ostriching. Bold (and nonsensical) claims like “SEO is DEAD” are ridiculous. “GEO is SEO” is not quite as silly, but it is certainly unfounded, at least based on my personal experience and the academic research I’ve reviewed. As in any new space, there will be purported gurus and “experts.” Let them have their 15 minutes. Search and discovery have changed fundamentally. Our skill sets will need to shift commensurately, or we will become less relevant.

Rather than offer prescriptive advice that may be irrelevant two months from now, I would pose four questions practitioners may want to answer for their own clients and organizations:

How do your AI search results compare to your organic rankings?

If you rank well on Google but don’t appear in ChatGPT, Gemini, Claude, or Perplexity responses, that gap is worth investigating. The technical and content factors aren’t identical, but they will overlap.

What are competitors doing technically that you’re not?

When a competitor gets cited and you don’t, look at their markup, their third-party mentions, and their content structure. The answers are often mundane: SSR, schema, a Wikipedia presence, or simply great PR.

What’s the cost when AI gets your brand wrong?

Misattribution, outdated information, and hallucinations happen. For some brands, this is a minor nuisance. For others, such as those in healthcare, finance, legal, or government, it’s a material risk worth quantifying.

Are you being cited, or is someone else being cited when AI talks about you?

Several practitioners discovered their visibility was actually going to third-party directories or competitors who mentioned them. High visibility doesn’t mean much if the citation links elsewhere.
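One way to answer this last question, sketched under assumptions: collect the source URLs cited in AI responses for your tracked prompts (manually or from a tool export), then measure what share point to domains you own. `example.com` stands in for a brand’s domain, and the function name `owned_citation_share` is hypothetical.

```python
from urllib.parse import urlsplit


def owned_citation_share(cited_urls: list[str], owned_domains: list[str]) -> tuple[float, list[str]]:
    """Return (share of citations on owned domains, list of third-party URLs)."""
    owned = {d.lower() for d in owned_domains}

    def is_owned(url: str) -> bool:
        host = urlsplit(url).hostname or ""  # urlsplit lowercases the hostname
        # Count the exact domain and its subdomains (e.g. www.) as owned.
        return any(host == d or host.endswith("." + d) for d in owned)

    if not cited_urls:
        return 0.0, []
    third_party = [u for u in cited_urls if not is_owned(u)]
    return (len(cited_urls) - len(third_party)) / len(cited_urls), third_party


if __name__ == "__main__":
    # Hypothetical citations gathered for prompts that mention the brand.
    urls = [
        "https://www.example.com/pricing",
        "https://directory.example.org/listing/example",
        "https://competitor.test/blog/vs-example",
        "https://example.com/about",
    ]
    share, others = owned_citation_share(urls, ["example.com"])
    print(f"{share:.0%} owned; third-party: {others}")
```

A brand can score well on “mentions” while half its citations send readers to directories or competitors; listing the third-party URLs makes that visible.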

Takeaways for Continued Research

This report captures a snapshot of Q4 2025 into early 2026. The landscape is shifting fast enough that several findings may require revisiting within months. A few areas warrant closer investigation:

Prompt volume methodology

Every practitioner we spoke with distrusts current prompt volume data. No platform, including Gander, has solved this with any level of accuracy. Semrush has explicitly stated that its volumes are meant for “directional” purposes, and LLM providers have shown no indication of releasing first-party data. Until that changes, any research claiming statistical significance on prompt volumes should be viewed skeptically. The same goes for any AI visibility or analytics platform.

From academic to applied

Frameworks like GEO16 have demonstrated links between specific optimizations and retrieval rates in controlled experiments. The gap is in translating those findings into repeatable workflows that practitioners can run, at scale, on real client sites. Bridging that gap is where the industry needs to focus.

The ROI gap

Citations and mentions are not clicks. The financial value of appearing in an AI-generated response, versus a traditional organic result, remains debatable. Workflows in Looker Studio and Google Analytics are part of the puzzle.

Platform divergence

ChatGPT, Gemini, Perplexity, and Claude retrieve and synthesize differently. Early findings suggest meaningful variation in how each weights sources, handles brand disambiguation, and displays citations. Platform-specific optimization strategies may emerge, or the platforms may converge. Your guess is as good as mine.

Would you like to help shape the next report?

We’re planning Q2 2026 research and want to hear from you. What questions are you struggling to answer? What would make this research more useful for your work?

Reach out via LinkedIn or fill out the form below.

About the Author: Adam Malamis

Adam Malamis is Head of Product at Gander, where he leads development of the company's AI analytics platform for tracking brand visibility across generative engines, like ChatGPT, Gemini, and Perplexity.

With over 20 years designing digital products for regulated industries including healthcare and finance, he brings a focus on information accuracy and user-centered design to the emerging field of Generative Engine Optimization (GEO). Adam holds certifications in accessibility (CPACC) and UX management from Nielsen Norman Group. When he's not analyzing AI search patterns, he's usually experimenting in the kitchen, in the garden, or exploring landscapes with his camera.